California AI Bill: Crucial SB 53 Faces Uncertain Veto from Newsom

BitcoinWorld


The digital frontier is rapidly evolving, and with it, the urgent need for robust governance. For those in the cryptocurrency space, understanding the broader regulatory landscape for emerging technologies like Artificial Intelligence (AI) is paramount, as these areas often intersect. A recent development from the Golden State has sent ripples through the tech world: the passage of the California AI bill, SB 53. This legislation aims to introduce significant changes to how large AI companies operate, but its future remains in the hands of Governor Gavin Newsom, creating a period of considerable uncertainty.

What is SB 53 and Why is This California AI Bill So Significant?

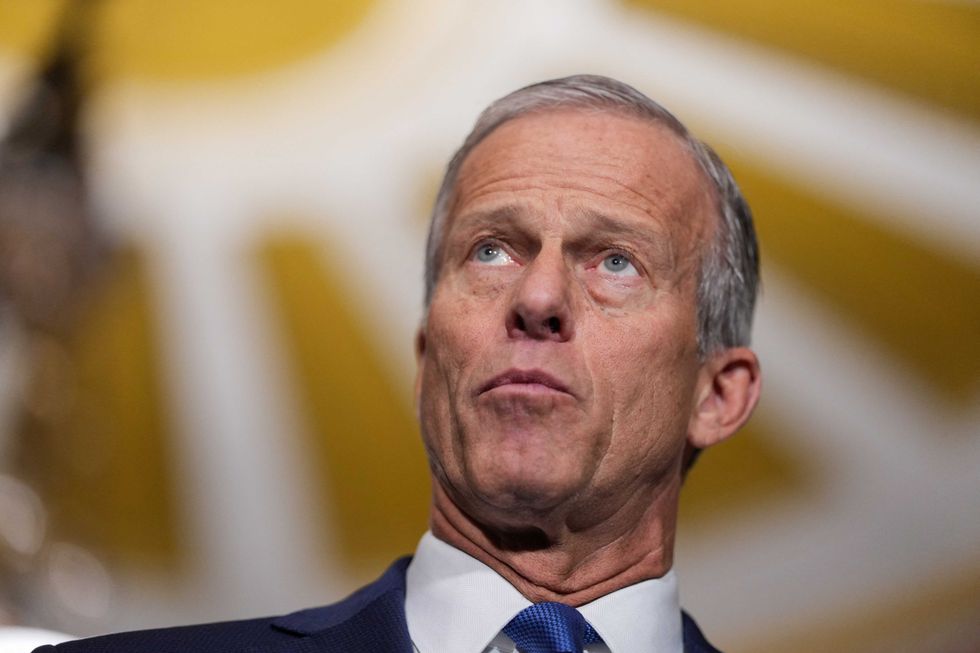

California’s state senate recently gave its final approval to SB 53, a landmark piece of legislation focused on AI safety. Authored by state senator Scott Wiener, the bill seeks to establish new transparency requirements for major AI developers. Wiener describes SB 53 as a measure that “requires large AI labs to be transparent about their safety protocols, creates whistleblower protections for [employees] at AI labs & creates a public cloud to expand compute access (CalCompute).”

This bill is significant because California is a global hub for technological innovation. Any AI safety regulation enacted here could set a precedent for other states and even federal policy. The legislation touches on several critical areas:

- Transparency: Large AI labs would need to disclose their safety protocols. This aims to provide greater insight into how powerful AI models are developed and deployed.

- Whistleblower Protections: Employees at AI labs would receive protections, encouraging them to report safety concerns without fear of retaliation.

- CalCompute: The bill proposes creating a public cloud to expand compute access, potentially democratizing AI development and research.

The core objective is to balance the rapid advancement of AI with the need to mitigate potential risks, ensuring responsible development and deployment of this transformative technology.

Gavin Newsom’s AI Stance: A History of Caution and Concern

The fate of SB 53 now rests with Governor Gavin Newsom. His decision is keenly awaited, especially given his past actions regarding AI legislation. Last year, Newsom vetoed a more expansive AI safety bill, also authored by Senator Wiener. While acknowledging the importance of “protecting the public from real threats posed by this technology,” Newsom criticized the previous bill for applying “stringent standards” to large models regardless of their deployment context or data sensitivity. He instead signed narrower legislation targeting specific issues like deepfakes.

This history highlights the nuanced approach Governor Newsom has taken toward AI regulation. He is clearly aware of the technology’s risks but also cautious about imposing overly broad or potentially stifling regulations on innovation. Senator Wiener has stated that the current SB 53 was influenced by recommendations from an AI expert panel convened by Newsom himself after his prior veto, suggesting a more tailored and considered approach this time around. The question remains: will this revised bill meet his approval, or will concerns about its scope still lead to a veto?

Industry Reactions to California’s AI Policy Initiatives

The prospect of new AI policy in California has elicited strong reactions across Silicon Valley. The industry is divided, reflecting the complex challenges of regulating a rapidly evolving field.

Opposition from Giants: OpenAI and Andreessen Horowitz

A number of prominent Silicon Valley companies, venture capital (VC) firms, and lobbying groups have voiced opposition to SB 53. OpenAI, while not specifically mentioning SB 53 in a recent letter to Newsom, argued for regulatory harmony. They suggested that companies meeting federal or European AI safety standards should be considered compliant with statewide rules, to avoid “duplication and inconsistencies.” This stance underscores a preference for unified, potentially less fragmented, regulatory frameworks.

Andreessen Horowitz (a16z), a major VC firm, has also been vocal. Its head of AI policy and its chief legal officer recently claimed that “many of today’s state AI bills — like proposals in California and New York — risk” violating constitutional limits on how states can regulate interstate commerce. This argument raises a fundamental legal challenge to state-level AI regulation, suggesting that such laws could overstep their bounds by affecting companies that operate across state lines. The firm’s co-founders have previously cited tech regulation as one factor in their support for Donald Trump, and the Trump administration and its allies have since called for a 10-year ban on state AI regulation.

Support from Anthropic: A Blueprint for AI Governance?

In contrast to the opposition, AI research company Anthropic has publicly come out in favor of SB 53. Anthropic co-founder Jack Clark stated, “We have long said we would prefer a federal standard. But in the absence of that this creates a solid blueprint for AI governance that cannot be ignored.” This perspective suggests that while a federal standard might be ideal, state-level initiatives like SB 53 can serve as valuable models for future regulation, filling a current void in comprehensive AI governance.

This divergence of opinion highlights the ongoing debate within the tech community about the most effective and appropriate ways to govern AI. Some prioritize innovation and worry about over-regulation, while others emphasize the urgent need for safeguards to ensure responsible development.

Navigating the Nuances: Key Amendments and Regulatory Tiers

Understanding the details of SB 53 is crucial, especially how it has evolved to address previous concerns. Politico reports a significant amendment: companies that develop “frontier” AI models and bring in less than $500 million in annual revenue will only need to disclose high-level safety details. In contrast, companies exceeding that revenue threshold will be required to provide more detailed reports. This tiered approach aims to tailor regulatory burdens to a company’s size and potential impact, potentially alleviating concerns about stifling smaller innovators while ensuring scrutiny for larger, more influential players.

This amendment reflects an attempt to create a more balanced AI safety regulation, acknowledging that not all AI developers pose the same level of systemic risk. It’s a pragmatic adjustment, potentially making the bill more palatable to a wider range of stakeholders, including Governor Newsom.

Comparison: Newsom’s Vetoed Bill vs. SB 53

| Feature | Previous Vetoed Bill | Current SB 53 |
|---|---|---|
| Scope of Application | Applied stringent standards broadly to large models. | Targets “large AI labs” with transparency requirements. |
| Revenue Tiers | Not explicitly mentioned as a distinguishing factor. | Introduces revenue tiers ($500M) for disclosure levels. |
| Specific Provisions | Less detailed on specific safety protocols and compute access. | Explicitly includes transparency protocols, whistleblower protections, and CalCompute. |
| Influence on Bill | Authored by Wiener, faced Newsom’s broad criticism. | Influenced by Newsom’s expert panel recommendations. |

The Future of AI Governance: A Pivotal Moment for California

The passage of the California AI bill, SB 53, marks a pivotal moment in the ongoing global discussion about AI governance. Whether Governor Newsom signs or vetoes it, the debate it has ignited underscores the urgent need for clear and effective frameworks to manage the power of AI. This legislation, and the reactions to it, offer valuable insights into the complexities of balancing innovation, safety, and economic impact.

For the broader tech and cryptocurrency communities, this legislative effort highlights a growing trend: governments are actively seeking to understand and regulate emerging technologies. The outcome in California could influence how other jurisdictions approach AI, shaping the future landscape of technological development and its ethical implications.

Conclusion: The Unfolding Impact of SB 53

As SB 53 makes its way to Governor Newsom’s desk, the tech world watches with bated breath. This AI safety regulation is more than just a piece of state legislation; it’s a test case for how democracies grapple with the profound challenges and opportunities presented by artificial intelligence. The debate between fostering innovation and ensuring public safety is at its core, with industry giants and advocates for responsible AI development offering contrasting visions. Governor Newsom’s final decision is likely to have a lasting impact, not just on California, but potentially on the global conversation around AI policy for years to come.


This post California AI Bill: Crucial SB 53 Faces Uncertain Veto from Newsom first appeared on BitcoinWorld.
