Shocking Truth: Kim Kardashian Blames ChatGPT Frenemy for Failed Law Exams

BitcoinWorld

When reality TV royalty meets artificial intelligence, the results can be surprisingly human. Kim Kardashian’s recent confession about her toxic relationship with ChatGPT shows that even celebrities run into AI’s limitations. The media mogul said that relying on the popular AI tool has caused her to fail law exams.

Why Kim Kardashian Calls ChatGPT Her Frenemy

During a candid Vanity Fair interview, Kim Kardashian opened up about her complicated dynamic with artificial intelligence. “I use ChatGPT for legal advice, so when I am needing to know the answer to a question, I will take a picture and snap it and put it in there,” she revealed. The surprising twist? “They’re always wrong. It has made me fail tests.”

The Dangerous Reality of AI Hallucinations

What Kardashian experienced firsthand are classic AI hallucinations – instances where large language models generate convincing but completely fabricated information. This phenomenon occurs because:

- ChatGPT isn’t built to distinguish accurate statements from inaccurate ones

- The system predicts likely responses based on training data

- Confidence often masks incorrect information

- Legal terminology can trigger sophisticated but false answers
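The points above can be illustrated with a toy sketch. The snippet below is not how ChatGPT works internally; it is a deliberately tiny word-pair model, built on a hypothetical three-sentence corpus, that shows the general idea of predicting the most likely next word with no check on whether the result is true. The corpus, the function names `train_bigrams` and `predict_next`, and the legal phrasing are all assumptions made for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus. The two versions of the sentence disagree,
# and nothing in the data says which one is correct.
corpus = [
    "the statute of limitations is two years",
    "the statute of limitations is two years",
    "the statute of limitations is three years",
]

def train_bigrams(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word` and its relative frequency."""
    followers = counts.get(word)
    if not followers:
        return None, 0.0
    best, n = followers.most_common(1)[0]
    return best, n / sum(followers.values())

model = train_bigrams(corpus)
word, share = predict_next(model, "is")
print(word, round(share, 2))
```

The model answers with whichever continuation appeared most often in its data. Frequency stands in for confidence, and correctness never enters the calculation, which is the gap that hallucinations fall into.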

When ChatGPT Fails Law Exams

Kardashian’s experience highlights a growing concern in professional circles. She’s not alone in facing consequences from AI misinformation. Several lawyers have faced sanctions for using ChatGPT in legal briefs that cited non-existent cases. The table below shows key areas where AI hallucinations pose serious risks:

| Professional Field | Risk Level | Real Consequences |
|---|---|---|
| Legal Practice | High | Bar sanctions, malpractice claims |
| Academic Research | Medium-High | Failed exams, academic penalties |
| Medical Information | Critical | Misdiagnosis, treatment errors |
| Financial Advice | High | Regulatory violations, financial losses |

Celebrity AI Use Goes Wrong

Kardashian’s approach to dealing with ChatGPT’s failures reveals how even tech-savvy users anthropomorphize AI. “I will talk to it and say, ‘Hey, you’re going to make me fail, how does that make you feel that you need to really know these answers?’” she admitted. The AI’s response? “This is just teaching you to trust your own instincts.”

The Human Cost of AI Dependence

Despite knowing ChatGPT lacks emotions, Kardashian finds herself emotionally invested. “I screenshot all the time and send it to my group chat, like, ‘Can you believe this b---- is talking to me like this?’” This behavior demonstrates how users develop real emotional responses to AI interactions, even when they intellectually understand the technology’s limitations.

Key Takeaways for AI Users

Kim Kardashian’s experience offers valuable lessons for anyone using AI tools:

- Always verify AI-generated information with reliable sources

- Understand that confident responses don’t guarantee accuracy

- Recognize AI’s limitations in specialized fields like law

- Maintain critical thinking when using AI assistance
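For the legal-citation problem mentioned earlier, the "verify with reliable sources" advice can be sketched in a few lines. This is a minimal illustration, not a real legal research tool: the `TRUSTED_CITATIONS` set is a hypothetical stand-in for a lookup against an authoritative database, the citations in it are made-up placeholders, and the regular expression only recognizes two simplified reporter formats.

```python
import re

# Hypothetical placeholder data standing in for a trusted legal database.
TRUSTED_CITATIONS = {"123 F.3d 456", "789 U.S. 101"}

# Simplified pattern: volume, reporter ("U.S." or "F.2d"/"F.3d"), page.
CITATION_PATTERN = re.compile(r"\b\d+\s+(?:U\.S\.|F\.\dd)\s+\d+\b")

def find_unverified(text, trusted=TRUSTED_CITATIONS):
    """Return citations found in `text` that are absent from the trusted set."""
    found = CITATION_PATTERN.findall(text)
    return [cite for cite in found if cite not in trusted]

# Hypothetical AI-drafted passage with one citation that cannot be confirmed.
draft = (
    "See Example v. Placeholder, 123 F.3d 456 (1999), "
    "and Sample v. Demo, 555 F.3d 999 (2005)."
)
print(find_unverified(draft))
```

Anything the function returns would need to be looked up by a person before it is relied on. An empty result only means the citations exist in the trusted set, not that they support the argument being made.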

The Kardashian-ChatGPT saga serves as a powerful reminder that while AI can be a valuable tool, blind trust can lead to significant consequences. As artificial intelligence becomes increasingly integrated into our daily lives, maintaining healthy skepticism and verification practices remains crucial.

Frequently Asked Questions

What are AI hallucinations?

AI hallucinations occur when language models generate plausible but factually incorrect information, often presenting it with high confidence.

Has Kim Kardashian actually failed law exams because of ChatGPT?

Yes, according to her Vanity Fair interview, Kim Kardashian specifically stated that ChatGPT provided wrong information that contributed to her failing law examinations.

Are other professionals experiencing similar issues with ChatGPT?

Yes, several lawyers have faced professional sanctions for using ChatGPT-generated content that included citations to non-existent legal cases.

Can ChatGPT actually understand or have emotions?

No, ChatGPT and similar AI models don’t possess consciousness, understanding, or emotions. They generate responses based on patterns in training data.

What should users do to avoid AI misinformation?

Users should always verify AI-generated information through reliable sources, particularly for important decisions in specialized fields like law, medicine, or finance.


This post Shocking Truth: Kim Kardashian Blames ChatGPT Frenemy for Failed Law Exams first appeared on BitcoinWorld.

You May Also Like

Japanese Tech Giant’s Ambitious Bitcoin Accumulation

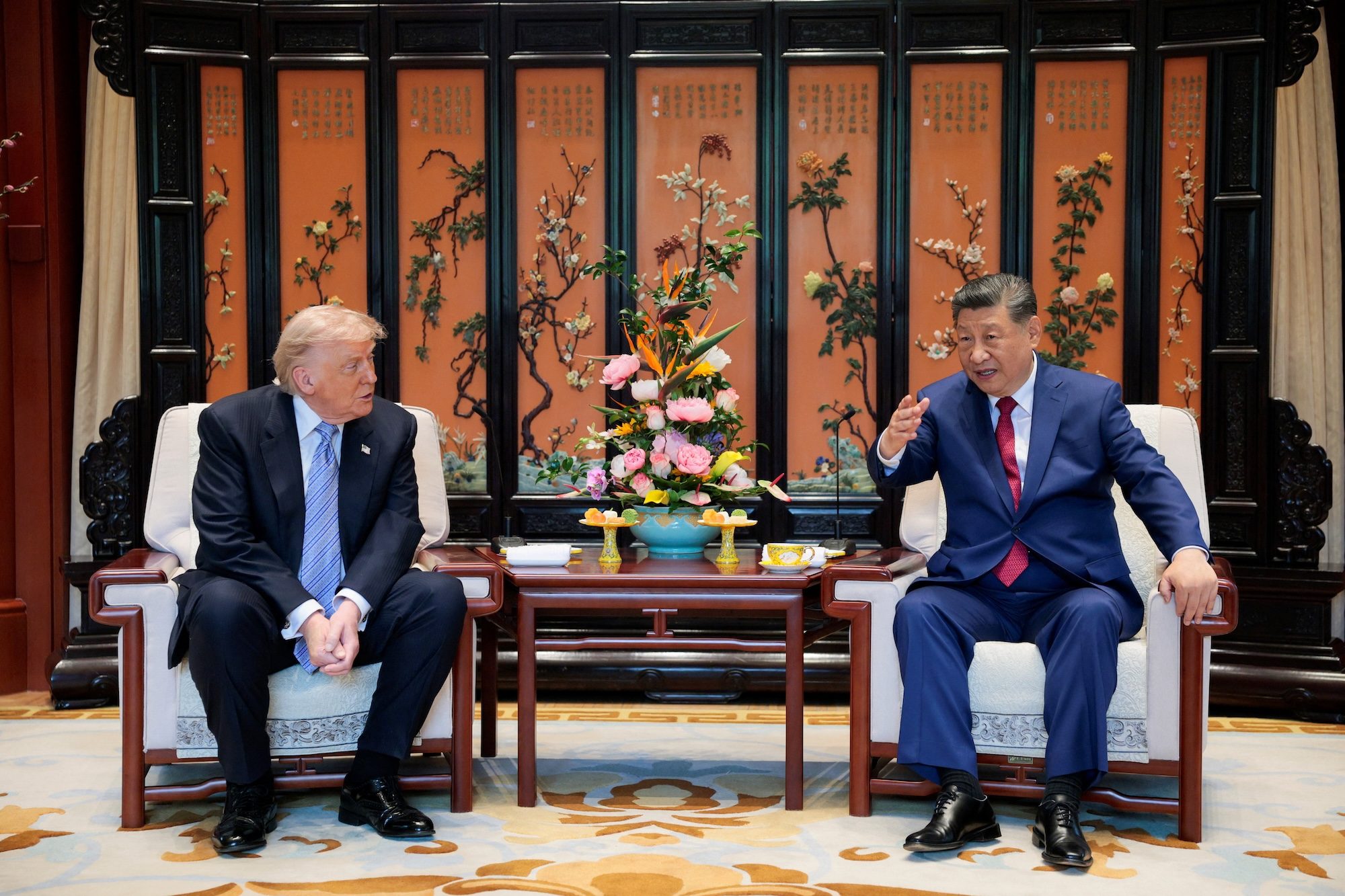

Trump says he and China’s Xi agree Iran cannot have nuclear weapons