Uber Joins Nebius to Boost Driverless Expansion in U.S. and Beyond

TL;DR

- Uber and cloud firm Nebius plan to invest up to $375 million in autonomous vehicle unit Avride.

- The deal supports expanding Avride’s driverless fleet to 500 cars and accelerating its international growth.

- Avride’s first driverless fleet, made up of Hyundai Ioniq 5 EVs, is set to debut in Dallas by late 2025, pending approvals.

- The investment deepens Uber’s push into self-driving mobility while raising new infrastructure and regulatory challenges.

Uber has announced a major step toward expanding its autonomous transportation footprint through a partnership with Nebius Group, a Dutch cloud technology company.

Together, the two firms plan to invest up to US$375 million in Avride, Nebius’s autonomous vehicle subsidiary based in Austin, Texas.

The funding package includes strategic investments and commercial commitments, with the potential for more capital if Avride hits specific development milestones. The collaboration underscores Uber’s continued drive toward autonomous ride-hailing and delivery services, which could reshape logistics and mobility across major cities.

According to the companies, the investment will help expand Avride’s driverless fleet to 500 vehicles while accelerating its product development and global reach. The financing is structured as a convertible note, which can be converted into equity at a later stage, and marks Avride’s first investment from an external partner.

Despite the partnership, Nebius will retain full ownership of Avride, emphasizing that the deal represents a cooperative investment rather than an acquisition.

Dallas Pilot Signals Next Frontier

Avride plans to roll out its first fully autonomous fleet in Dallas, Texas, by the end of 2025. The fleet will consist of Hyundai Ioniq 5 electric vehicles, each equipped with Avride’s proprietary self-driving software and sensor systems.

Uber has already partnered with Avride in several U.S. cities to test driverless rides and delivery robots, though these programs remain limited in scale. The Dallas launch will serve as a key testbed for assessing Avride’s readiness to operate without human safety drivers in complex urban environments.

However, regulatory approval remains a hurdle. The National Highway Traffic Safety Administration (NHTSA) requires autonomous vehicle developers to report crashes and incidents, but no formal performance benchmarks yet exist. The National Transportation Safety Board (NTSB) continues to review automation-related accidents, emphasizing that fully driverless systems must meet strict safety conditions before deployment.

Without official state and federal approval, Avride’s 500-car expansion plan will likely depend on evolving testing and certification standards within Texas.

Infrastructure Challenges and Charging Expansion

A fleet of 500 electric Hyundai Ioniq 5s will require substantial charging infrastructure to support daily operations. Energy providers and developers in Texas are already positioning themselves to capture this demand.

Utility companies such as Oncor, Dallas’s main transmission and distribution operator, have begun expanding capacity to accommodate transportation electrification. Local utilities are also offering commercial charging rebates, including up to $5,000 for DC fast-charging installations from Austin Energy and up to $1,500 from Entergy, which serves parts of Texas and the Gulf region.

Texas currently hosts roughly 835 DC fast-charging stations, most located in major urban areas. Experts say fleet charging operators could gain a competitive edge by establishing dedicated depots near Avride’s early service zones before other networks expand.
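
As a rough illustration of the depot-sizing question raised above, the following Python sketch estimates how many DC fast chargers a fleet might need. Only the fleet size of 500 comes from the article. The daily energy use per vehicle, charger power, usable charging hours, and charging efficiency are hypothetical placeholders chosen for illustration, not figures from Avride, Uber, or any utility.

```python
import math


def chargers_needed(fleet_size, kwh_per_vehicle_per_day, charger_kw,
                    usable_hours_per_day, charging_efficiency=0.9):
    """Back-of-envelope count of DC fast chargers needed to cover daily demand.

    All parameters except fleet_size are illustrative assumptions.
    """
    # Energy drawn from the grid each day, allowing for charging losses.
    daily_energy_kwh = fleet_size * kwh_per_vehicle_per_day / charging_efficiency
    # Energy one charger can deliver per day at its rated power.
    per_charger_kwh = charger_kw * usable_hours_per_day
    # Round up, since a fraction of a charger cannot be installed.
    return math.ceil(daily_energy_kwh / per_charger_kwh)


# fleet_size=500 is from the article; every other value is a placeholder.
estimate = chargers_needed(
    fleet_size=500,
    kwh_per_vehicle_per_day=60,   # assumed daily consumption per vehicle
    charger_kw=150,               # assumed DC fast-charger rating
    usable_hours_per_day=12,      # assumed hours each charger is in use
)
print(estimate)
```

The estimate ignores queuing, peak-hour clustering, and charging-curve taper, so a real depot would likely need headroom beyond whatever number this simple ratio produces.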

Global Vision and Industry Implications

For Uber, the Nebius partnership represents more than just an investment: it is a strategic bet on the future of driverless mobility. By combining Nebius’s cloud infrastructure expertise with Avride’s AI-driven vehicle systems, Uber aims to integrate autonomous ride-hailing and delivery operations more deeply into its platform.

Industry analysts view the deal as part of a broader trend in which technology and transportation companies are joining forces to commercialize autonomous fleets. While challenges remain, the partnership signals strong confidence in the long-term potential of AI-driven transportation ecosystems.

As the Dallas pilot takes shape, Uber’s collaboration with Nebius could become a model for how traditional mobility platforms evolve into autonomous, electrified, and globally scalable networks.

The post Uber Joins Nebius to Boost Driverless Expansion in U.S. and Beyond appeared first on CoinCentral.

You May Also Like

Bitcoin World Reveals Top 5 Stunning Gainers And Losers

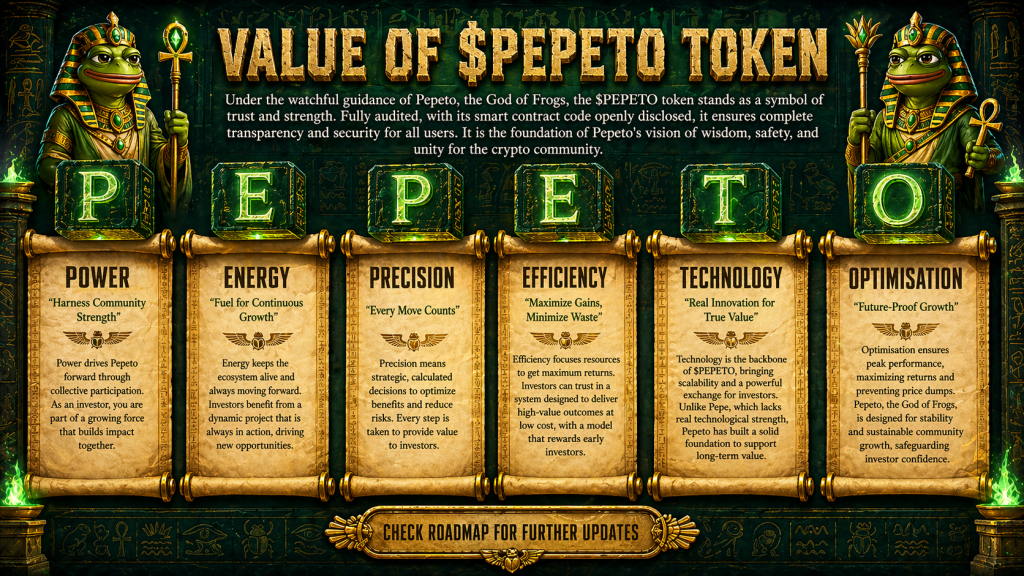

Crypto News: Morgan Stanley Says Banks Could Hold Bitcoin as DOGE and Chainlink (LINK) Rally While Pepeto Follows the Same Entry Pattern